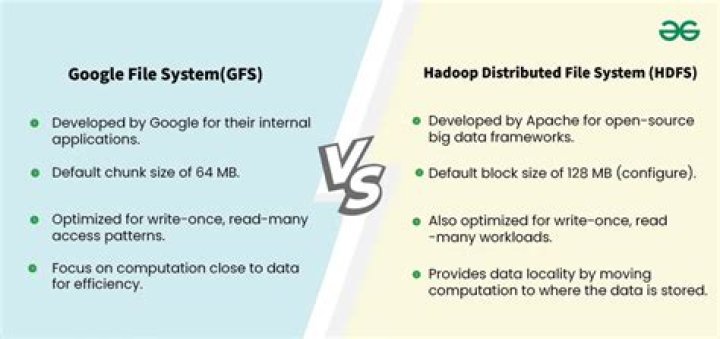

The key difference between Hadoop and HDFS is that Hadoop is a framework for storing, managing, and processing big data, whereas HDFS is the component of Hadoop that provides distributed file storage for that data.

Keeping this in consideration, what is the difference between the hadoop fs and hdfs dfs commands?

In a nutshell, hadoop fs is a more “generic” command that lets you interact with multiple file systems, including HDFS, whereas hdfs dfs is the command specific to HDFS. The two commands are synonymous when the file system in use is HDFS.
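To make the distinction concrete, here is a minimal Python sketch that only assembles the two command lines as argument lists, without running them. The host `namenode.example.com`, the port `8020`, and the paths are placeholders chosen for illustration, and the helper `fs_shell` is hypothetical rather than part of any Hadoop library.

```python
import shlex

# The two launchers discussed above.
HADOOP_FS = ("hadoop", "fs")
HDFS_DFS = ("hdfs", "dfs")


def fs_shell(launcher, subcommand, *args):
    """Build an argv list such as ['hadoop', 'fs', '-ls', '/data'].

    Nothing is executed here; the list could later be passed to
    subprocess.run on a machine that has Hadoop installed.
    """
    return [*launcher, subcommand, *args]


# Placeholder URI: host and port are assumptions, not real values.
hdfs_path = "hdfs://namenode.example.com:8020/user/alice"

# hadoop fs picks the file system from the URI scheme.
list_hdfs = fs_shell(HADOOP_FS, "-ls", hdfs_path)
list_local = fs_shell(HADOOP_FS, "-ls", "file:///tmp")

# hdfs dfs pointed at the same HDFS path.
list_hdfs_dfs = fs_shell(HDFS_DFS, "-ls", hdfs_path)

for argv in (list_hdfs, list_local, list_hdfs_dfs):
    print(shlex.join(argv))
```

When the target is an HDFS path, the two command lines differ only in the launcher, which is the sense in which the commands are synonymous.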

Also, what is the use of HDFS in Hadoop?

The Hadoop Distributed File System (HDFS) is the primary data storage system used by Hadoop applications. It employs a NameNode and DataNode architecture to implement a distributed file system that provides high-performance access to data across highly scalable Hadoop clusters.
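The NameNode and DataNode split can be illustrated with a toy in-memory model. This is a sketch under simplifying assumptions, not how HDFS is implemented: the classes `NameNode` and `DataNode`, the helpers `write_file` and `read_file`, the tiny block size, and the round-robin placement are all invented for illustration, and replication is left out.

```python
from dataclasses import dataclass, field


@dataclass
class DataNode:
    """Holds the actual block contents."""
    name: str
    blocks: dict = field(default_factory=dict)  # block id -> bytes


@dataclass
class NameNode:
    """Holds only metadata: which blocks make up a file and where they live."""
    namespace: dict = field(default_factory=dict)  # path -> [(block id, node name)]


def write_file(namenode, datanodes, path, data, block_size):
    """Split data into blocks, store them on DataNodes, record locations."""
    locations = []
    for offset in range(0, len(data), block_size):
        index = offset // block_size
        block_id = f"{path}#blk{index}"
        node = datanodes[index % len(datanodes)]  # toy round-robin placement
        node.blocks[block_id] = data[offset:offset + block_size]
        locations.append((block_id, node.name))
    namenode.namespace[path] = locations


def read_file(namenode, datanodes, path):
    """Ask the NameNode for block locations, then fetch from the DataNodes."""
    by_name = {node.name: node for node in datanodes}
    return b"".join(
        by_name[node_name].blocks[block_id]
        for block_id, node_name in namenode.namespace[path]
    )


nodes = [DataNode("dn1"), DataNode("dn2")]
nn = NameNode()
write_file(nn, nodes, "/logs/a.txt", b"hello hdfs", block_size=4)
print(nn.namespace["/logs/a.txt"])
print(read_file(nn, nodes, "/logs/a.txt"))
```

The point of the sketch is the division of labor: the metadata lookup goes to one place, while the data itself is read from the nodes that store the blocks.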

Simply so, what is the difference between HDFS and Hive?

HDFS is the storage layer of Hadoop, so the comparison is really between Hadoop and Hive. Hadoop is a framework for storing and processing big data, while Hive is an SQL-based tool built on top of Hadoop to query that data. Hive expresses its queries in HQL (Hive Query Language), an SQL-like language, whereas Hadoop's native processing model is MapReduce.

What does DFS mean in HDFS?

DFS stands for distributed file system.

Related Question Answers

What is the Hadoop FS command?

The File System (FS) shell includes various shell-like commands that interact directly with the Hadoop Distributed File System (HDFS) as well as other file systems that Hadoop supports, such as Local FS, HFTP FS, S3 FS, and others.

What is an HDFS client?

A client in Hadoop is the interface used to communicate with the Hadoop file system. Hadoop provides different types of clients for different tasks. The basic file system client, hdfs dfs, is used to connect to a Hadoop file system and perform basic file-related tasks.

What is FsShell?

The File System (FS) shell includes various shell-like commands that interact directly with the Hadoop Distributed File System (HDFS) as well as other file systems that Hadoop supports, such as Local FS, WebHDFS, S3 FS, and others.

What is Hadoop architecture?

The Hadoop architecture is a package of the file system, the MapReduce engine, and HDFS (the Hadoop Distributed File System). The MapReduce engine can be MapReduce/MR1 or YARN/MR2. A Hadoop cluster consists of a single master node and multiple slave nodes.

What is core-site.xml in Hadoop?

The core-site.xml file tells the Hadoop daemons where the NameNode runs in the cluster. It contains the configuration settings for Hadoop Core, such as I/O settings that are common to HDFS and MapReduce.

What is the difference between the copyToLocal and get commands?

-put and -copyFromLocal are almost the same command, with one difference: copyFromLocal is similar to put, but its source is restricted to a local file reference. The same relationship holds for the pair in the question: copyToLocal is similar to get, but its destination is restricted to a local file reference.

How do I delete a folder in Hadoop?

You can remove the directories that held the storage location's data by either of the following methods:

- Use an HDFS file manager to delete the directories.

- Log into the Hadoop NameNode using the database administrator's account and use HDFS's rmr command to delete the directories. In current Hadoop releases, rmr is deprecated in favor of rm -r.
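The command-line method can be wrapped in a small Python helper. This is a hedged sketch: it uses `hdfs dfs -rm -r`, the current form of the recursive delete, and it defaults to a dry run that only returns the command. The function name `remove_hdfs_dir`, the safety check, and the path `/tmp/old_storage` are assumptions made for the example.

```python
import subprocess


def remove_hdfs_dir(path, dry_run=True):
    """Build, and optionally run, a recursive delete for an HDFS directory.

    With dry_run=True (the default) nothing is executed, so the function
    is safe to call on a machine without Hadoop installed.
    """
    # Guard against relative paths and against deleting the root directory.
    if not path.startswith("/") or path.rstrip("/") == "":
        raise ValueError("refusing to delete a relative path or the root directory")

    argv = ["hdfs", "dfs", "-rm", "-r", path]
    if not dry_run:
        # check=True raises CalledProcessError if the command fails.
        subprocess.run(argv, check=True)
    return argv


# Hypothetical directory name, shown in dry-run mode.
print(remove_hdfs_dir("/tmp/old_storage"))
```

Passing `dry_run=False` runs the command, so it is worth printing and checking the dry-run result first.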

Can Hive work without Hadoop?

Hadoop is like a core, and Hive needs some libraries from it. Traditionally, Hive requires HDFS and MapReduce, so you will need both; the gist is that Hive depends on Hadoop to some degree. That advice is partly out of date: with Hive on Spark, HDFS support is no longer necessary.

Is Hadoop a NoSQL database?

Hadoop is not a type of database, but rather a software ecosystem that allows for massively parallel computing. It is an enabler of certain types of NoSQL distributed databases (such as HBase), which allow data to be spread across thousands of servers with little reduction in performance.

Which is better, Hive or Pig?

Apache Pig is reported to be 36% faster than Apache Hive for join operations on datasets, 46% faster for arithmetic operations, 10% faster when filtering 10% of the data, and 18% faster when filtering 90% of the data.

Is Hive a Hadoop component?

Apache Hive is a Hadoop component that is normally used by data analysts. It is an open-source data warehousing system used to query and analyze huge datasets stored in Hadoop. The three main functions for which Hive is deployed are data summarization, data analysis, and data querying.

Why is Hive used in Hadoop?

Hive is an ETL and data warehouse tool built on top of the Hadoop ecosystem and used for processing structured and semi-structured data. It acts as a database within the Hadoop ecosystem that performs DDL and DML operations, and it provides a flexible query language, HQL, for querying and processing data.

Is Hive SQL or NoSQL?

Apache Hive offers a SQL dialect oriented toward reads, so in that sense it exposes the nonstandard, SQL-like interface of a relational database, but of an OLAP type rather than an OLTP type. It supports multiple sources of data, typically distributed systems in the big data space.

Why is HBase faster than Hive?

HBase is faster than Hive at fetching data. Hive is used to process structured data, whereas HBase, being schema-free, can process any type of data. HBase is also highly (horizontally) scalable compared to Hive.

When should I use HBase vs. HDFS?

HDFS follows a write-once, read-many model: files cannot be modified in place once written, so it does not facilitate dynamic storage. HBase allows for dynamic changes and can be used for standalone applications. HBase is ideally suited for random reads and writes of data that is stored in HDFS.

Is Hive a database?

Hive acts as a database within the Hadoop ecosystem: it performs DDL and DML operations and provides a flexible query language, HQL, for querying and processing data.

Where is HDFS data stored?

In HDFS, data is stored in blocks; a block is the smallest unit of data that the file system stores. Files are broken into blocks that are distributed across the cluster according to the replication factor.

How does HDFS work?

HDFS works by having a main "NameNode" and multiple "DataNodes" on a cluster of commodity hardware. Data is broken down into separate "blocks" that are distributed among the DataNodes for storage. Blocks are also replicated across nodes to reduce the likelihood of data loss when a node fails.

How is data stored in HDFS?

On a Hadoop cluster, the data within HDFS and the MapReduce system are housed on every machine in the cluster. Data is stored in data blocks on the DataNodes. HDFS replicates those data blocks, usually 128 MB in size, and distributes them so that each block is held on multiple nodes across the cluster.
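The block arithmetic can be worked through in a few lines of Python. This is a rough sketch, assuming a 128 MB block size and a replication factor of 3. The function `plan_blocks` and the node names are hypothetical, and the round-robin replica placement is a deliberate simplification: real HDFS uses a rack-aware placement policy.

```python
MB = 1024 * 1024


def plan_blocks(file_size, datanodes, block_size=128 * MB, replication=3):
    """Return a list of (block index, block length, replica nodes).

    Replicas are assigned round-robin, which is a toy policy used here
    only to show that each block lands on several distinct nodes.
    """
    if replication > len(datanodes):
        raise ValueError("need at least as many DataNodes as replicas")

    plan = []
    offset = 0
    index = 0
    while offset < file_size:
        # The last block may be shorter than the configured block size.
        length = min(block_size, file_size - offset)
        replicas = [
            datanodes[(index + r) % len(datanodes)] for r in range(replication)
        ]
        plan.append((index, length, replicas))
        offset += length
        index += 1
    return plan


nodes = ["dn1", "dn2", "dn3", "dn4"]
plan = plan_blocks(300 * MB, nodes)
for index, length, replicas in plan:
    print(f"block {index}: {length // MB} MB on {', '.join(replicas)}")
```

Under these assumptions, a 300 MB file splits into two full 128 MB blocks and one 44 MB block, and each block is held by three different nodes.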